Salus SEO & AI Visibility Audit

Salus is a runtime safety platform that validates and blocks incorrect agent actions before execution. It provides guardrails that intercept tool calls, return structured feedback for self-correction, and offer full visibility into agent interactions with evaluation tools for testing.

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 73% | C |
| AEO (AI Visibility) | 5% | F |
| E-E-A-T | 42% | F |
| Combined | 39% | — |

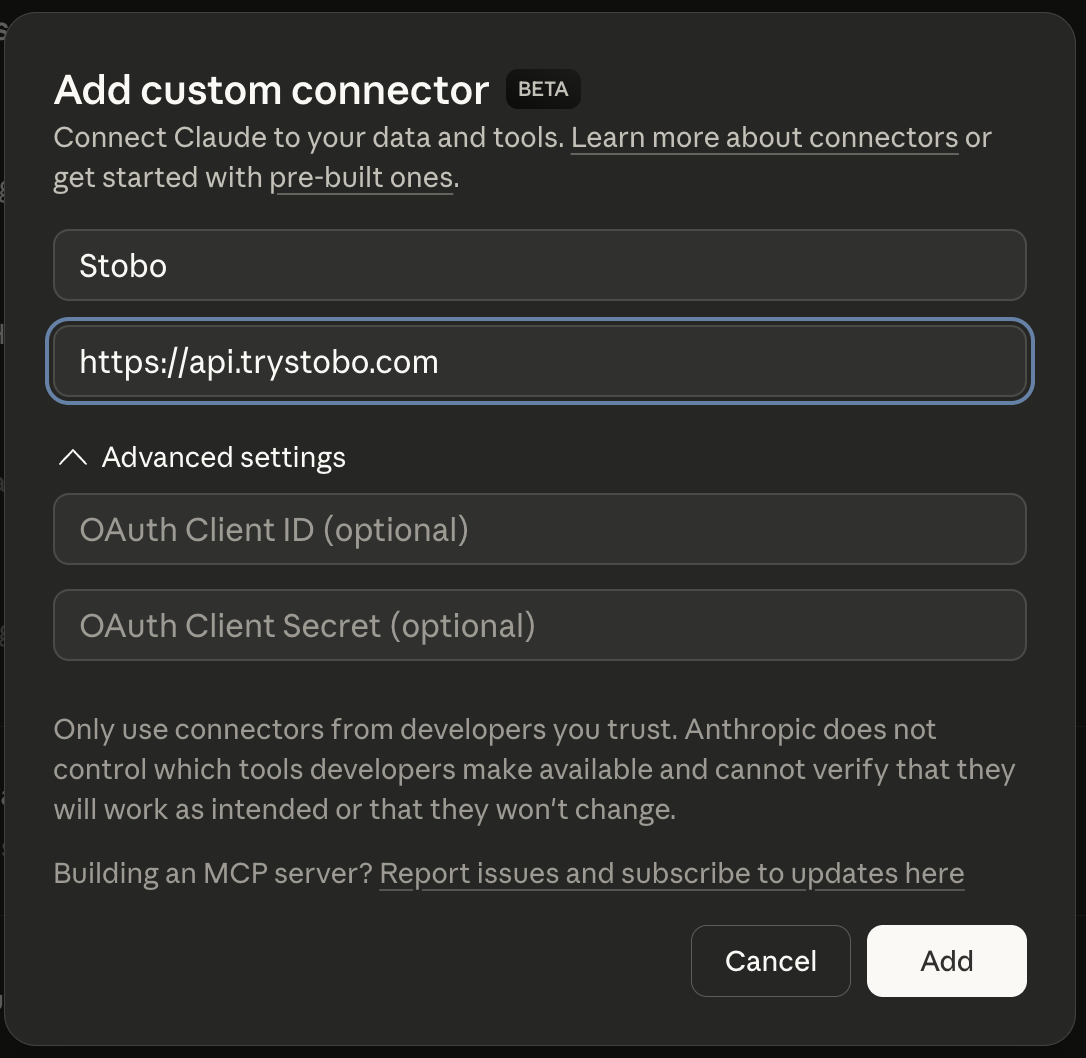

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or other IDEs.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 53% | No about/team page, social profiles, or LinkedIn links detected; no privacy policy link detected; 6 security headers missing; 1/4 contact signals found |
| Expertise | 60% | 280 words, 9 headings, 4 outbound links |
| Authoritativeness | 20% | No Organization JSON-LD schema found; no JSON-LD schema types detected; no third-party review or trust signals detected |
| Experience | 29% | Found, Freshness issues, Found |
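
The Authoritativeness row flags a missing Organization JSON-LD schema. As a rough illustration of what such markup can look like, here is a minimal Python sketch that builds a schema.org Organization object and wraps it in a script tag. The organization name and domain come from this audit; the `https://` scheme, the function name `organization_jsonld`, and the choice of fields are assumptions, and this is not the output Stobo generates.

```python
import json


def organization_jsonld(name: str, url: str) -> str:
    """Return a script tag holding a minimal schema.org Organization object."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Name and domain are from the audit; the https scheme is assumed.
snippet = organization_jsonld("Salus", "https://usesalus.ai")
print(snippet)
```

The resulting tag would typically go in the page's `<head>`. A real deployment would likely add more properties, such as a logo or social profile links, once those exist.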

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: usesalus.ai
- stobo this article: usesalus.ai/blog/my-article
- Generate a robots.txt for usesalus.ai
- stobo my blog: usesalus.ai/blog

Recommendations

- Freshness issues: no date signals found (AEO)

  Add date markup (datePublished, dateModified) so AI engines know content is current. Stobo generates the JSON-LD. Prompt: Generate freshness code for usesalus.ai

- robots.txt not accessible (HTTP 404) (AEO)

  Create a robots.txt that allows AI crawlers like GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: Generate a robots.txt for usesalus.ai

- llms.txt not found (HTTP 404) (AEO)

  Create an llms.txt file so AI models can understand what usesalus.ai does. Stobo generates one automatically. Prompt: Generate an llms.txt for usesalus.ai
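
To make the robots.txt recommendation concrete, the following Python sketch writes a permissive robots.txt that names the two crawlers mentioned above and then checks the result with the standard library's `urllib.robotparser`. The helper name `build_robots_txt`, the allow-everything policy, and the `https://usesalus.ai/blog` test URL are assumptions for illustration; it is not the file Stobo generates, and a real site may want to disallow some paths.

```python
from urllib.robotparser import RobotFileParser

# The two AI crawlers named in the recommendation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def build_robots_txt(crawlers):
    """Build a robots.txt that explicitly allows each crawler, then everyone else."""
    lines = []
    for agent in crawlers:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(AI_CRAWLERS)

# Parse the generated text locally to confirm the rules read as intended.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
gptbot_allowed = parser.can_fetch("GPTBot", "https://usesalus.ai/blog")
claudebot_allowed = parser.can_fetch("ClaudeBot", "https://usesalus.ai/blog")

print(robots_txt)
```

The file only takes effect once it is served from the domain root at `/robots.txt`; the local parse is just a sanity check before uploading.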

Track your score over time

We'll re-audit your site in 7 days and email you what changed. Free, automatic.

Embed your audit badge

Show your score on your README or website.

Frequently asked questions

- Why can't search engines access my robots.txt file?

- Your robots.txt file returns a 404 error, so crawlers cannot read it. Most crawlers treat a missing robots.txt as permission to crawl everything, which leaves you with no way to give them crawl directives. Create a robots.txt file and upload it to your domain root.

- What happens when my site is missing an llms.txt file?

- Your site lacks an llms.txt file, which AI systems use for crawling guidance. This file helps AI tools understand how to interact with your content. Consider adding one to improve AI discoverability and control.

- How do missing date signals hurt my search rankings?

- Your content lacks publication or update dates, making it harder for search engines to assess freshness. This impacts both traditional SEO and AI optimization. Add clear timestamps to show when content was created or last updated.

- Why does content freshness matter for my website's authority?

- Search engines can't determine when your content was published or updated without date signals. This hurts your site's expertise and trustworthiness scores. Clear timestamps help establish content credibility and recency for better rankings.

- How long should my page openings be for better SEO?

- Your page openings are too short at just 12 words, limiting their effectiveness for direct answers in search results. Expand introductions to 50-100 words to better capture featured snippets and AI responses.
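
For the date-signal questions above, one common way to expose timestamps is schema.org markup with the `datePublished` and `dateModified` properties. The Python sketch below builds such a JSON-LD object for a single article. The function name `article_dates_jsonld`, the `Article` type, the headline, and both dates are placeholders chosen for illustration, and the `https://` scheme is assumed; none of them come from the audit.

```python
import json
from datetime import date


def article_dates_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Return JSON-LD for an article with ISO 8601 publication and update dates."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Placeholder headline and dates; substitute the real values for each page.
markup = article_dates_jsonld(
    "My article",
    "https://usesalus.ai/blog/my-article",
    published=date(2025, 1, 15),
    modified=date(2025, 2, 1),
)
print(markup)
```

The output would be embedded in a `<script type="application/ld+json">` tag on the article page, ideally alongside a visible date so that readers and crawlers see the same information.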