Burt SEO & AI Visibility Audit

Burt is an AI platform that builds autonomous teammates for logistics operations. It provides AI agents that automate warehouse tasks, route planning, and supply chain workflows with real-time coordination capabilities that reduce manual work and improve operational efficiency.

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 73% | C |
| AEO (AI Visibility) | 10% | F |
| E-E-A-T | 42% | F |
| Combined | 42% | n/a |

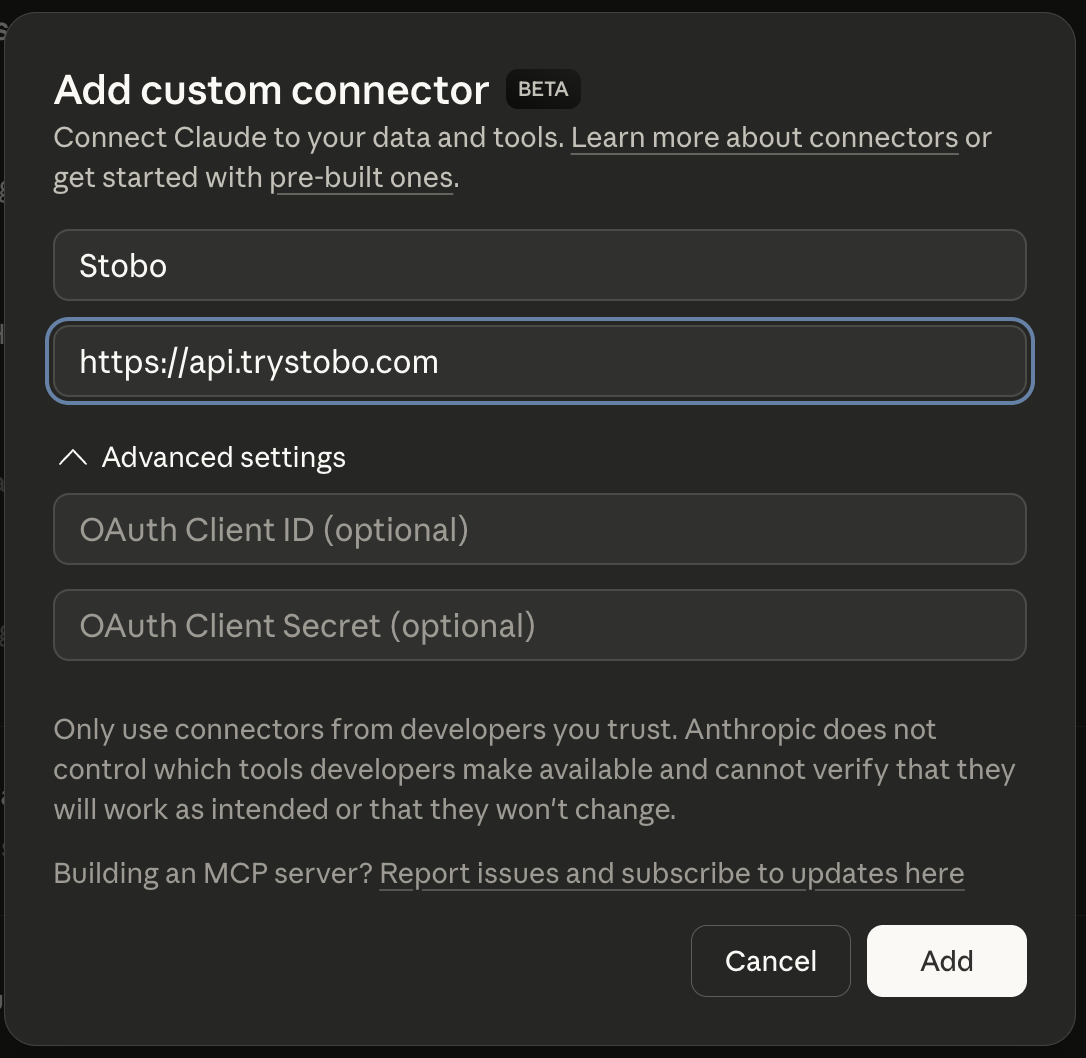

The fastest way to fix your site is to use our Custom Connector in Claude Desktop or in your IDE.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 60% | Found, 6 security headers missing, No contact transparency signals detected |
| Expertise | 47% | 64 words, 2 headings, 4 outbound links |
| Authoritativeness | 20% | No Organization JSON-LD schema found, No JSON-LD schema types detected, No third-party review or trust signals detected |
| Experience | 21% | No social proof signals detected, Freshness issues, No first-hand experience signals detected |
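The Trustworthiness row reports six missing security headers but does not name them. The following is a minimal sketch for checking a site's response against a header list. It assumes six widely recommended security headers, which may not match the audit's own list, and `missing_security_headers` and `fetch_headers` are hypothetical helper names.

```python
import urllib.request

# Assumed list: the audit does not name the six headers it checks, so these
# six widely recommended security headers are used for illustration only.
EXPECTED_SECURITY_HEADERS = [
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
    "Referrer-Policy",
    "Permissions-Policy",
]


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Return the expected headers that are absent from a response.

    HTTP header names are case-insensitive, so names are compared lowercased.
    """
    present = {name.lower() for name in headers}
    return [h for h in EXPECTED_SECURITY_HEADERS if h.lower() not in present]


def fetch_headers(url: str) -> dict[str, str]:
    """Fetch a URL and return its response headers.

    Repeated headers (e.g. Set-Cookie) collapse to their first value.
    """
    with urllib.request.urlopen(url, timeout=10) as resp:
        return dict(resp.headers)
```

Usage: `missing_security_headers(fetch_headers("https://trainburt.com"))` returns the names from the assumed list that the homepage response does not send.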

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: trainburt.com
- stobo this article: trainburt.com/blog/my-article
- Generate a robots.txt for trainburt.com
- stobo my blog: trainburt.com/blog

Recommendations

- Freshness issues: no date signals found [AEO]

  Add date markup (datePublished, dateModified) so AI engines can tell that the content is current. Stobo generates the JSON-LD.

  Try: "Generate freshness code for trainburt.com"

- robots.txt not accessible (HTTP 404) [AEO]

  Create a robots.txt file that explicitly allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version.

  Try: "Generate a robots.txt for trainburt.com"

- llms.txt not found (HTTP 404) [AEO]

  Create an llms.txt file so AI models can understand what trainburt.com does. Stobo generates one automatically.

  Try: "Generate an llms.txt for trainburt.com"
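All three recommendations produce small text or JSON files, so a short script can draft them. This is a minimal sketch, not Stobo's generator. The sitemap URL, the "Key pages" section name, the link list, and the example dates are placeholder assumptions. The llms.txt layout (H1 title, blockquote summary, Markdown link list) follows the llms.txt proposal at llmstxt.org, and the date properties are the schema.org `datePublished` and `dateModified` named above.

```python
import json
from datetime import date

SITE = "https://trainburt.com"


def robots_txt(site: str, ai_agents: list[str]) -> str:
    """Build a robots.txt that explicitly allows the given AI crawlers."""
    groups = [f"User-agent: {agent}\nAllow: /" for agent in ai_agents]
    groups.append("User-agent: *\nAllow: /")  # everyone else: no restrictions
    # Placeholder sitemap location; point this at the real sitemap if one exists.
    return "\n\n".join(groups) + f"\n\nSitemap: {site}/sitemap.xml\n"


def llms_txt(name: str, summary: str, links: dict[str, str]) -> str:
    """Build an llms.txt in the proposed layout: H1, blockquote summary, link list."""
    lines = [f"# {name}", "", f"> {summary}", "", "## Key pages", ""]
    lines += [f"- [{title}]({url})" for title, url in links.items()]
    return "\n".join(lines) + "\n"


def freshness_jsonld(url: str, published: date, modified: date) -> str:
    """Wrap schema.org date properties in a JSON-LD script tag (ISO 8601 dates)."""
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    print(robots_txt(SITE, ["GPTBot", "ClaudeBot"]))
    print(llms_txt(
        "Burt",
        "AI teammates that automate warehouse tasks, route planning, "
        "and supply chain workflows.",
        {"Blog": f"{SITE}/blog"},  # placeholder link list
    ))
    # Placeholder dates; use each page's real publication and update dates.
    print(freshness_jsonld(f"{SITE}/blog/my-article",
                           date(2024, 1, 15), date(2024, 6, 1)))
```

Serve robots.txt and llms.txt from the site root. Place the script tag in each page's HTML, and keep its dates in sync with the visible dates on the page.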

Track your score over time

We'll re-audit your site in 7 days and email you what changed. Free, automatic.

Embed your audit badge

Show your score on your README or website.

Frequently asked questions

- Why can't search engines find my robots.txt file?

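- Your site returns an HTTP 404 error when crawlers request /robots.txt, which means the file does not exist. robots.txt tells crawlers which URLs they may crawl. It controls crawling, not indexing. Google treats a 404 here as "no crawl restrictions," so nothing is blocked today. However, you lose the chance to set explicit rules for AI crawlers and to point crawlers at your sitemap. Create a robots.txt file in your site's root directory (trainburt.com/robots.txt) to fix this.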

- How do missing date signals hurt my search rankings?

- Your content lacks publication and update dates, which makes it harder for search engines and AI systems to judge how current it is. Add visible dates to your pages and include matching structured data markup (datePublished, dateModified) so freshness is stated explicitly rather than inferred.

- What is llms.txt and why does my site need it?

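- Your site is missing llms.txt, a proposed convention for a Markdown file at the site root that summarizes your site for AI systems and links to its key pages. Create it at trainburt.com/llms.txt with a short description of what Burt does and links to your most important content. Support varies across AI systems, so treat it as a low-cost addition to AI-driven visibility rather than a guaranteed boost.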

- Does fresh content really impact my search performance?

- Yes, especially for time-sensitive queries, where search engines weigh recency more heavily. Your pages show no date information, so neither readers nor search engines can tell when the content was published or last updated. Add publication and last-modified dates, and update your content regularly to signal freshness.

- Should I add FAQ schema markup to my website?

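- Your site has no FAQ schema markup. Since August 2023, Google shows FAQ rich results only for well-known, authoritative government and health websites, so FAQPage markup is unlikely to earn rich snippets for this site. It can still make question-answer content easier for other search engines and AI systems to parse, so adding FAQPage structured data to pages with genuine FAQ content remains a reasonable, low-cost step.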
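FAQPage structured data nests each question-answer pair under `mainEntity`, using the schema.org `Question` type with an `acceptedAnswer` of type `Answer`. The following is a minimal sketch that builds this markup from pairs like the ones in this FAQ. `faq_jsonld` is a hypothetical helper, and the answers in the usage lines are shortened placeholders.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build schema.org FAQPage data from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    # Shortened placeholder answers; use the full text that is visible on the page.
    faqs = [
        ("Why can't search engines find my robots.txt file?",
         "Your site returns HTTP 404 for /robots.txt. Create the file at the site root."),
        ("What is llms.txt and why does my site need it?",
         "A proposed Markdown file that summarizes your site for AI systems."),
    ]
    print('<script type="application/ld+json">')
    print(json.dumps(faq_jsonld(faqs), indent=2))
    print("</script>")
```

The marked-up questions and answers should match the FAQ text that readers actually see on the page.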