Skillsync SEO & AI Visibility Audit

Skillsync is a talent discovery platform that identifies skilled engineers through their open source contributions on GitHub. It enables recruiting teams to filter candidates by seniority and expertise, generate personalized outreach emails, and discover overlooked engineering talent based on actual shipped code rather than claims.

SEO, AEO (answer engine optimization), and E-E-A-T, combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 76% | C |
| AEO (AI Visibility) | 5% | F |
| E-E-A-T | 48% | F |
| Combined | 41% | N/A |

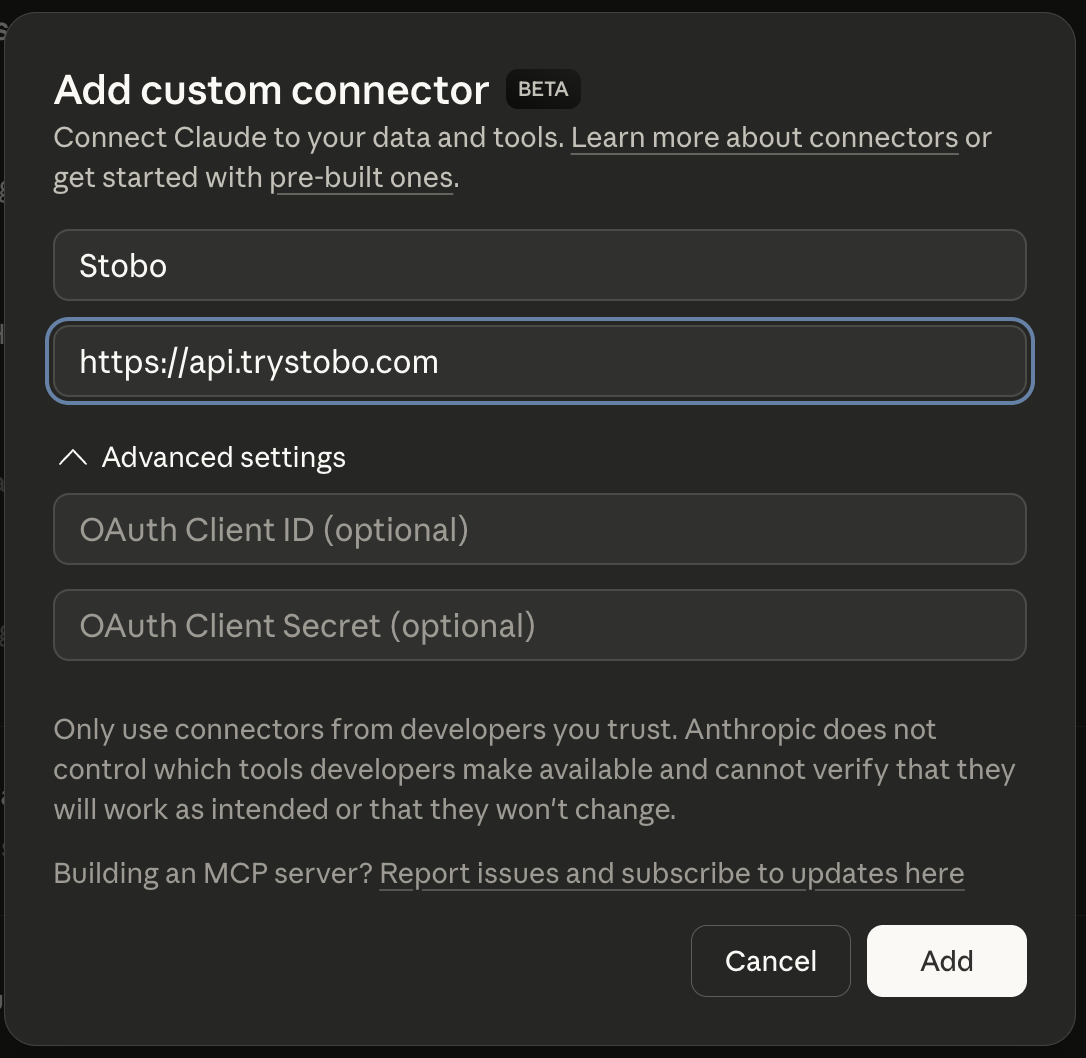

The fastest way to fix your site is to use our custom connector for Claude Desktop or a supported IDE.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 53% | Found, No privacy policy link detected, 6 security headers missing, No terms of service link detected |
| Expertise | 67% | All checks passing |
| Authoritativeness | 37% | No Organization JSON-LD schema found, No third-party review or trust signals detected |
| Experience | 26% | Found, Freshness issues, No first-hand experience signals detected |
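The Authoritativeness row flags a missing Organization JSON-LD block. A minimal sketch of one, built with Python's standard `json` module, is below. The properties used (`name`, `url`, `description`, `sameAs`) are a small subset of what schema.org's Organization type allows, and every value in the usage block, including the GitHub profile URL, is a hypothetical placeholder rather than verified data about Skillsync.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization object wrapped in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists external profiles that identify the same organization.
        "sameAs": same_as,
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    # Hypothetical values; replace them with the organization's real details.
    print(organization_jsonld(
        name="Skillsync",
        url="https://skillsync.wiki",
        description="Talent discovery platform that identifies engineers through their open source contributions.",
        same_as=["https://github.com/example-org"],
    ))
```

The printed tag belongs in the page's `<head>`; keeping it behind a function makes it easy to reuse the same organization details on every page.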
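The Trustworthiness row reports 6 missing security headers but does not name them. The sketch below assumes they are the six commonly recommended headers listed in `EXPECTED_HEADERS`; that list is an assumption, not taken from the audit. `missing_headers` works on any header mapping, and `fetch_headers` retrieves one with a standard-library HEAD request.

```python
from urllib.request import Request, urlopen

# ASSUMPTION: six commonly recommended security headers. The audit does not
# say which six it checks, so adjust this tuple to match its report.
EXPECTED_HEADERS = (
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
    "Referrer-Policy",
    "Permissions-Policy",
)


def missing_headers(headers: dict[str, str]) -> list[str]:
    """Return expected headers absent from a response; header names are compared case-insensitively."""
    present = {name.lower() for name in headers}
    return [h for h in EXPECTED_HEADERS if h.lower() not in present]


def fetch_headers(url: str, timeout: float = 10.0) -> dict[str, str]:
    """Send a HEAD request and return the response headers as a plain dict."""
    with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
        return dict(resp.headers.items())
```

For example, `missing_headers(fetch_headers("https://skillsync.wiki"))` lists whichever of the assumed headers the live site does not send. Some servers answer HEAD differently from GET, so a GET request is a reasonable fallback.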

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: skillsync.wiki
- stobo this article: skillsync.wiki/blog/my-article
- Generate a robots.txt for skillsync.wiki
- stobo my blog: skillsync.wiki/blog

Recommendations

- Freshness issues: no date signals found [AEO]

  Add date markup (datePublished, dateModified) so AI engines can tell the content is current. Stobo generates the JSON-LD. Prompt: "Generate freshness code for skillsync.wiki"

- robots.txt not accessible (HTTP 404) [AEO]

  Create a robots.txt that allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: "Generate a robots.txt for skillsync.wiki"

- llms.txt not found (HTTP 404) [AEO]

  Create an llms.txt file so AI models can understand what skillsync.wiki does. Stobo generates one automatically. Prompt: "Generate an llms.txt for skillsync.wiki"
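For the freshness recommendation, here is a minimal sketch of the date markup, assuming a schema.org type that inherits `datePublished` and `dateModified` from CreativeWork (such as WebPage or BlogPosting). The URL and dates in the usage block are hypothetical, and this is not necessarily the JSON-LD Stobo itself generates.

```python
import json
from datetime import date


def freshness_jsonld(url: str, published: date, modified: date, page_type: str = "WebPage") -> str:
    """Return a JSON-LD script tag with datePublished and dateModified for one page."""
    if modified < published:
        raise ValueError("dateModified should not be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": page_type,  # e.g. "WebPage" or "BlogPosting"
        "url": url,
        # date.isoformat() yields ISO 8601 (YYYY-MM-DD), the format schema.org Date values use.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return '<script type="application/ld+json">' + json.dumps(data) + "</script>"


if __name__ == "__main__":
    # Hypothetical URL and dates for illustration only.
    print(freshness_jsonld(
        "https://skillsync.wiki/blog/my-article",
        published=date(2024, 1, 15),
        modified=date(2024, 3, 2),
        page_type="BlogPosting",
    ))
```

Updating `modified` whenever the page content actually changes keeps the signal honest; pairing it with a visible "last updated" line gives readers the same information.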
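For the robots.txt recommendation, the sketch below pairs a candidate file that explicitly allows GPTBot and ClaudeBot with a check using Python's `urllib.robotparser`. The file contents, including the Sitemap URL, are an assumption for illustration, not the version Stobo generates.

```python
from urllib.robotparser import RobotFileParser

# ASSUMPTION: a candidate robots.txt; the Sitemap URL is a hypothetical placeholder.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Allow: /

Sitemap: https://skillsync.wiki/sitemap.xml
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report, per user agent, whether the given robots.txt rules allow fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    print(crawler_access(ROBOTS_TXT, "https://skillsync.wiki/blog", ["GPTBot", "ClaudeBot"]))
```

Once the real file is live, `RobotFileParser.set_url()` followed by `read()` loads it from the site, so the same `can_fetch` check can confirm the deployed rules parse as intended.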
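For the llms.txt recommendation, the sketch below renders a file in the layout described by the llms.txt proposal: an H1 with the site name, a blockquote summary, then H2 sections of Markdown links. The section name, link, and note in the usage block are hypothetical placeholders; real entries should point at the site's actual key pages.

```python
def build_llms_txt(name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render llms.txt text: an H1 title, a blockquote summary, then H2 sections of links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            # Each entry is a Markdown link, optionally followed by ": note".
            lines.append(f"- [{title}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_llms_txt(
        "Skillsync",
        "Talent discovery platform that identifies skilled engineers through their open source contributions on GitHub.",
        # Hypothetical section and link; list the site's real key pages instead.
        {"Content": [("Blog", "https://skillsync.wiki/blog", "Articles from the Skillsync blog")]},
    ))
```

The output is served as plain text at the site root (skillsync.wiki/llms.txt), next to robots.txt.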

Track your score over time

We'll re-audit your site in 7 days and email you what changed. It's free and automatic.

Embed your audit badge

Show your score on your README or website.

Frequently asked questions

- Why can't search engines find my robots.txt file?

  Your robots.txt file returns a 404 error, meaning it doesn't exist or isn't accessible. Search engines expect this file at yoursite.com/robots.txt to understand crawling permissions. Create a robots.txt file and serve it from the root of your domain.

- What does it mean when my site has no date signals?

  Your pages lack publication or update dates that search engines can detect. This hurts freshness scoring for both traditional SEO and AI systems. Add structured data with dates (datePublished, dateModified), visible timestamps, or HTML `<time datetime="...">` elements.

- Do I need an llms.txt file for AI optimization?

  Your site is missing llms.txt. The file is a proposed convention rather than a formal standard: a Markdown file at the site root that gives AI systems a concise summary of what the site does and links to its key pages. It is optional, but cheap to add, and it complements robots.txt, which controls crawler access rather than describing content.

- How does missing freshness data affect my search rankings?

  Without date signals, search engines can't easily judge how current your content is. In this audit, the missing dates also lower the Experience score within E-E-A-T. Recently updated content can have an edge, especially for time-sensitive queries, so add publication dates and last-modified information to show your content is current and maintained.

- Why are my page openings too short for AI systems?

  Your opening paragraphs contain only 12 words, which is insufficient for AI systems to understand your content context. Expand your introductions to 50-100 words with clear, descriptive text that summarizes your page's main topic and purpose.
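The last answer sets a concrete target of 50 to 100 words. A rough way to check a page against it, assuming the "opening" is the text of the first `<p>` element (the audit does not say how it measures openings), is to parse the HTML with the standard library's `html.parser`. Pages whose text is rendered client-side by JavaScript would need the rendered HTML as input.

```python
from html.parser import HTMLParser


class FirstParagraph(HTMLParser):
    """Collect the text inside the first <p> element of an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.inside = False  # True while parsing the first <p>
        self.done = False    # True once the first </p> has been seen
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "p" and not self.done:
            self.inside = True

    def handle_endtag(self, tag):
        if tag == "p" and self.inside:
            self.inside = False
            self.done = True

    def handle_data(self, data):
        # Text inside inline children such as <strong> or <a> is included too.
        if self.inside:
            self.parts.append(data)


def opening_word_count(html: str) -> int:
    """Count whitespace-separated words in the first <p>; 0 if there is none."""
    parser = FirstParagraph()
    parser.feed(html)
    parser.close()
    return len("".join(parser.parts).split())


def opening_in_range(html: str, low: int = 50, high: int = 100) -> bool:
    """Check the opening against the 50-100 word target from the audit."""
    return low <= opening_word_count(html) <= high
```

Joining the text fragments without a separator keeps a word intact when an inline tag splits it, at the cost of merging words that are separated only by a tag such as `<br>`.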