Sciloop SEO & AI Visibility Audit

Sciloop is an AI platform that automates end-to-end machine learning research workflows. Researchers use it to test ideas, manage experiments, and iterate on models without handling infrastructure, enabling faster validation and deployment of ML projects.

sciloop.dev

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 75% | C |
| AEO (AI Visibility) | 15% | F |
| E-E-A-T | 42% | F |
| Combined | 45% | N/A |

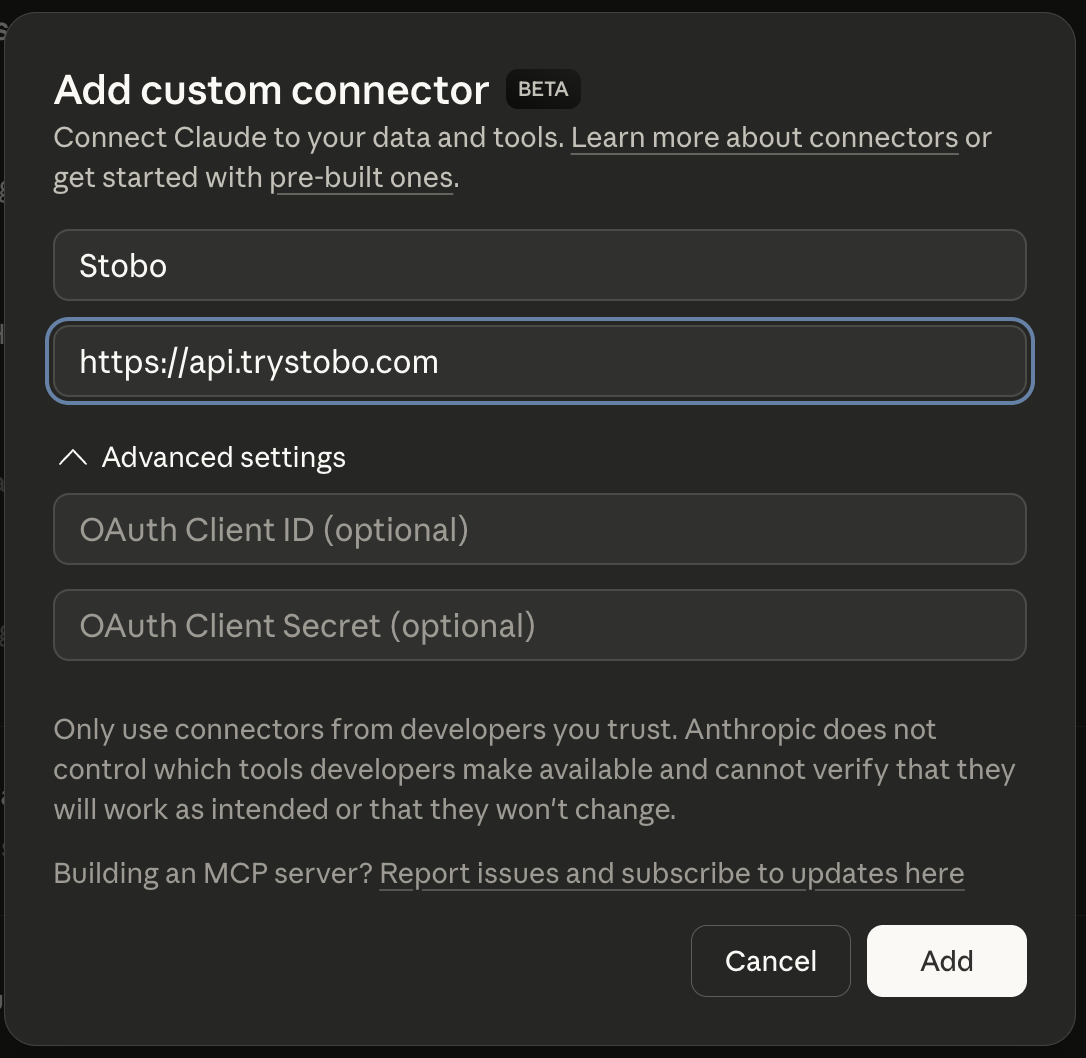

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or an IDE.

E-E-A-T Breakdown

| Signal | Score | Issues |
|---|---|---|
| Trustworthiness | 46% | No about/team page, social profiles, or LinkedIn links detected; no privacy policy link detected; 6 security headers missing; no terms of service link detected |
| Expertise | 80% | All checks passing |
| Authoritativeness | 20% | No Organization JSON-LD schema found; no JSON-LD schema types detected; no third-party review or trust signals detected |
| Experience | 19% | Found; Freshness issues; Found 4 headings; no first-hand experience signals detected |
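
The Authoritativeness row flags a missing Organization JSON-LD schema. As a rough illustration of what such markup could look like, the sketch below builds a schema.org `Organization` object and wraps it in a script tag for the page head. The helper names, the `https://` scheme, and the empty `sameAs` list are assumptions made for this example; the description is taken from the summary at the top of this report, and real profile URLs would need to be supplied by the site owner.

```python
import json


def organization_jsonld(name, url, description, same_as=()):
    """Build a schema.org Organization object as a plain dict (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
    }
    # sameAs lists official profiles (LinkedIn, GitHub, ...). None are known
    # for this site, so the key is omitted unless URLs are passed in.
    if same_as:
        data["sameAs"] = list(same_as)
    return data


def as_script_tag(data):
    """Serialize a JSON-LD dict into a script tag suitable for the page head."""
    payload = json.dumps(data, indent=2)
    # Escape "<" so a string value can never close the script element early.
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


org = organization_jsonld(
    name="Sciloop",
    url="https://sciloop.dev",  # scheme assumed; the report only gives the domain
    description=(
        "AI platform that automates end-to-end machine learning "
        "research workflows."
    ),
)
print(as_script_tag(org))
```

Adding this tag would address the two JSON-LD findings only; the third-party review and trust-signal finding needs real external references and cannot be generated.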

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: sciloop.dev

- stobo this article: sciloop.dev/blog/my-article

- Generate a robots.txt for sciloop.dev

- stobo my blog: sciloop.dev/blog

Recommendations

- robots.txt not accessible (HTTP 404) [AEO]

  Create a robots.txt that allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: Generate a robots.txt for sciloop.dev

- Freshness issues: no date signals found [AEO]

  Add date markup (datePublished, dateModified) so AI engines know the content is current. Stobo generates the JSON-LD. Prompt: Generate freshness code for sciloop.dev

- llms.txt not found (HTTP 404) [AEO]

  Create an llms.txt file so AI models can understand what sciloop.dev does. Stobo generates one automatically. Prompt: Generate an llms.txt for sciloop.dev
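
For readers who prefer to write these two files by hand, here is a minimal sketch of what they might contain. It is not Stobo's generator: the function names are hypothetical, the llms.txt layout follows the public llms.txt proposal (a title heading, a blockquote summary, then sections of links) as best understood here, and the `https://` URLs are assumed from the domain and blog path mentioned in this report. The robots.txt output is checked with Python's standard `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from the recommendation above.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def build_robots_txt(ai_crawlers):
    """Return robots.txt text that explicitly allows each listed AI crawler."""
    lines = []
    for agent in ai_crawlers:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    # Default group for every other crawler.
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


def build_llms_txt(title, summary, sections):
    """Return llms.txt text: a title, a one-line summary, and link sections."""
    out = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        out += [f"## {heading}", ""]
        out += [f"- [{label}]({url})" for label, url in links]
        out.append("")
    return "\n".join(out).rstrip() + "\n"


robots = build_robots_txt(AI_CRAWLERS)

# Sanity check: parse the generated rules and confirm both crawlers may fetch.
parser = RobotFileParser()
parser.parse(robots.splitlines())
allowed = {bot: parser.can_fetch(bot, "https://sciloop.dev/blog") for bot in AI_CRAWLERS}

llms = build_llms_txt(
    title="Sciloop",
    summary="AI platform that automates end-to-end machine learning research workflows.",
    sections={
        # URLs assumed from the domain and blog path named in this report.
        "Pages": [
            ("Home", "https://sciloop.dev"),
            ("Blog", "https://sciloop.dev/blog"),
        ],
    },
)

print(robots)
print(allowed)
print(llms)
```

Both files are served from the site root, at `/robots.txt` and `/llms.txt`, which are the paths that currently return HTTP 404.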


Frequently asked questions

- Why isn't my site's llms.txt file accessible to AI crawlers?

- Your site returns a 404 error when AI systems look for the llms.txt file. This file helps AI crawlers understand how to interact with your content. You need to create and upload an llms.txt file to your root directory to fix this issue.

- What does it mean when robots.txt returns a 404 error?

- Your robots.txt file is missing or inaccessible, returning a 404 error. This file tells search engines and AI crawlers which parts of your site they can access. You should create a robots.txt file in your root directory.

- How do missing date signals hurt my site's search performance?

- Your pages lack publication or update dates, making it hard for search engines to assess content freshness. This impacts rankings for time-sensitive topics. Add visible publication dates and last-modified timestamps to your pages to improve this signal.

- Why is content freshness critical for my site's authority?

- Search engines can't determine when your content was created or updated because date signals are missing. This weakens the Experience signal in your E-E-A-T score, where the freshness issue is flagged. Add clear publication and update dates to demonstrate that your content is current and reliable.

- Should I add FAQ schema markup to my site pages?

- Your site currently has no FAQ schema markup, which means search engines miss opportunities to show rich snippets. Adding FAQPage structured data helps your content appear in featured snippets and voice search results, improving visibility.
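
The date-signal and FAQ-schema answers above both come down to adding JSON-LD. A minimal sketch, under stated assumptions, might look like the following: the function names are hypothetical, the headline and both dates are placeholders rather than real publication data, and the article URL is the example path used earlier in this report with an assumed `https://` scheme. The property names (`datePublished`, `dateModified`, `mainEntity`, `acceptedAnswer`) are standard schema.org vocabulary.

```python
import json
from datetime import date


def article_jsonld(headline, url, published, modified):
    """Build schema.org Article markup carrying explicit date signals."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        # ISO 8601 dates, e.g. "2025-01-15"
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


article = article_jsonld(
    headline="My article",                        # placeholder headline
    url="https://sciloop.dev/blog/my-article",    # example path from this report
    published=date(2025, 1, 15),                  # placeholder date
    modified=date(2025, 3, 1),                    # placeholder date
)

faq = faq_jsonld([
    (
        "What does it mean when robots.txt returns a 404 error?",
        "Your robots.txt file is missing or inaccessible. Create a "
        "robots.txt file in your root directory.",
    ),
])

print(json.dumps(article, indent=2))
print(json.dumps(faq, indent=2))
```

Each printed object would go inside its own `<script type="application/ld+json">` element on the relevant page, alongside a visible date so that readers see the same information as crawlers.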