Parse SEO & AI Visibility Audit

Parse is a web automation API that reverse-engineers any website into a structured REST endpoint. It enables developers and AI agents to read data, fill forms, book reservations, and extract pricing from any site without writing code or running browser automation. Usage is billed per API call.

parse.bot

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 80% | B |
| AEO (AI Visibility) | 24% | F |
| E-E-A-T | 60% | F |
| Combined | 52% | - |

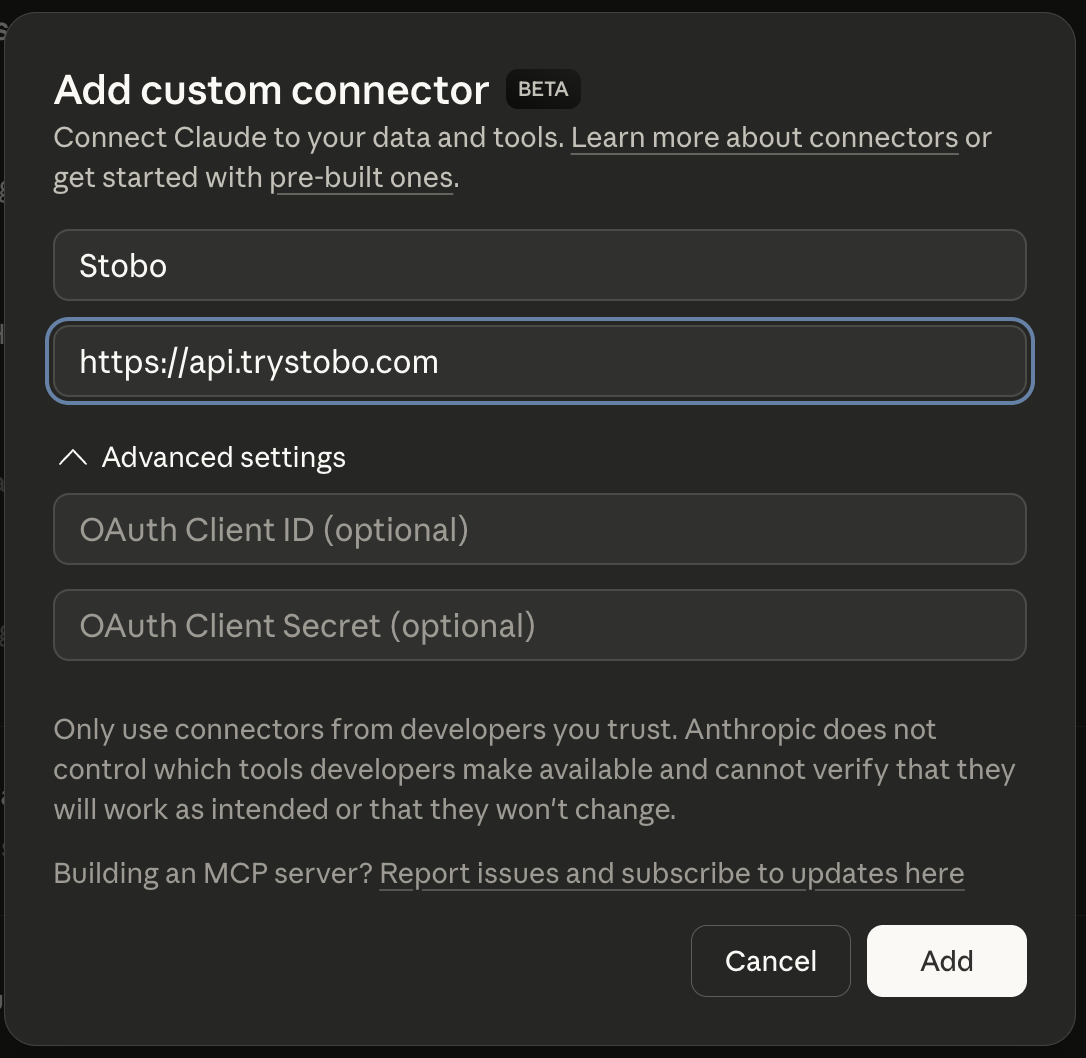

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or other IDEs.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 71% | No about/team page, social profiles, or LinkedIn links detected; 5/7 security headers present; 1/4 contact signals found |
| Expertise | 93% | All checks passing |
| Authoritativeness | 34% | No Organization JSON-LD schema found; no third-party review or trust signals detected |
| Experience | 24% | No social proof signals detected; freshness issues: no date signals found |
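
The Authoritativeness row flags a missing Organization JSON-LD schema. As a rough illustration of what that markup could look like, the sketch below builds a schema.org `Organization` object and wraps it in a script tag. The `https://` scheme, the `sameAs` profile URL, and the helper names `organization_jsonld` and `as_script_tag` are assumptions made for this example, not values reported by the audit.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists official profiles (LinkedIn, GitHub, and so on).
        "sameAs": list(same_as),
    }


def as_script_tag(data):
    """Wrap a JSON-LD dict in the script tag that is placed in the page head."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical values: replace the profile URL with the real company pages.
org = organization_jsonld(
    name="Parse",
    url="https://parse.bot",
    same_as=["https://www.linkedin.com/company/example"],
)
print(as_script_tag(org))
```

Listing real social and LinkedIn profiles under `sameAs` would also address the missing profile links noted in the Trustworthiness row.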

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: parse.bot
- stobo this article: parse.bot/blog/my-article
- Generate a robots.txt for parse.bot
- stobo my blog: parse.bot/blog

Recommendations

- robots.txt not accessible (HTTP 404) [AEO]
  - Create a robots.txt at the site root that allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: "Generate a robots.txt for parse.bot"
- Freshness issues: no date signals found [AEO]
  - Add date markup (datePublished, dateModified) so AI engines know content is current. Stobo generates the JSON-LD. Prompt: "Generate freshness code for parse.bot"
- Freshness issues: no date signals found [E-E-A-T]
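
To make the robots.txt recommendation above concrete, here is a minimal sketch that writes a permissive file naming the two AI crawlers mentioned (GPTBot and ClaudeBot) and then checks it with Python's standard `urllib.robotparser`. The function name `build_robots_txt` and the allow-everything policy are assumptions for illustration; the file Stobo generates may differ.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens taken from the recommendation text.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def build_robots_txt(ai_crawlers):
    """Return robots.txt content that explicitly allows each named crawler."""
    lines = []
    for agent in ai_crawlers:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    # Fallback group for every other crawler (assumed policy: allow all).
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(AI_CRAWLERS)
print(robots_txt)

# Sanity check: parse the generated text and confirm each crawler may fetch the root.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in AI_CRAWLERS:
    print(agent, "allowed:", parser.can_fetch(agent, "https://parse.bot/"))
```

The generated text would be served at `/robots.txt` on the domain root. Any paths that should stay private would need their own `Disallow` rules, which this sketch does not attempt to guess.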

Frequently asked questions

- Why can't search engines find when your content was published?
  - Your site lacks date signals such as publication dates or last-modified timestamps, so search engines cannot determine how fresh the content is. Add visible publication dates and structured data timestamps to show when your content was created or updated.
- What happens when your robots.txt file returns a 404 error?
  - Your robots.txt file is inaccessible and returns HTTP 404. Most crawlers treat a missing robots.txt as permission to crawl everything, so you have no way to state crawling preferences for search engines or AI crawlers. Create a valid robots.txt file at your domain root to guide how bots crawl your site.
- How does missing FAQ schema affect your search visibility?
  - Your site lacks FAQ schema markup and misses opportunities for rich snippets in search results. FAQ structured data helps search engines display your Q&A content directly in search results. Add FAQPage markup to eligible content to improve visibility.
- Why do you need social proof signals on your website?
  - Your site has no detectable social proof signals such as testimonials, reviews, or social media mentions. These signals help establish credibility and trustworthiness with both users and search engines. Add customer testimonials, review widgets, or social media integration.
- How does content without dates impact your SEO performance?
  - Missing publication and update dates hurt your content's search performance. Search engines favor fresh, recently updated content for many queries. Add visible dates and implement date markup to signal content freshness and improve your search rankings.
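
The date markup these answers refer to (`datePublished`, `dateModified`) can be sketched as a small JSON-LD generator. The example assumes a schema.org `Article` type, an `https://` URL for the article path used in the prompts earlier, and placeholder headline and dates. The function name `freshness_jsonld` is hypothetical and none of these values come from the audit itself.

```python
import json
from datetime import date


def freshness_jsonld(url, headline, published, modified):
    """Build Article JSON-LD carrying ISO 8601 publication and update dates."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "url": url,
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


# Placeholder values for illustration only.
markup = freshness_jsonld(
    url="https://parse.bot/blog/my-article",
    headline="Example article title",
    published=date(2025, 1, 15),
    modified=date(2025, 3, 2),
)
print(json.dumps(markup, indent=2))
```

The resulting JSON would go inside a `<script type="application/ld+json">` tag on the article page, alongside a visible date in the page body so human readers and crawlers see the same information.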