Hypercubic SEO & AI Visibility Audit

Hypercubic is an agentic AI platform for mainframe modernization that captures and preserves institutional knowledge from legacy systems and subject matter experts. It provides digital knowledge twins and auto-updating documentation for COBOL and mainframe operations, helping enterprises reduce downtime risk and accelerate system modernization.

hypercubic.ai

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 84% | B |
| AEO (AI Visibility) | 36% | D |
| E-E-A-T | 65% | D |
| Combined | 60% | — |

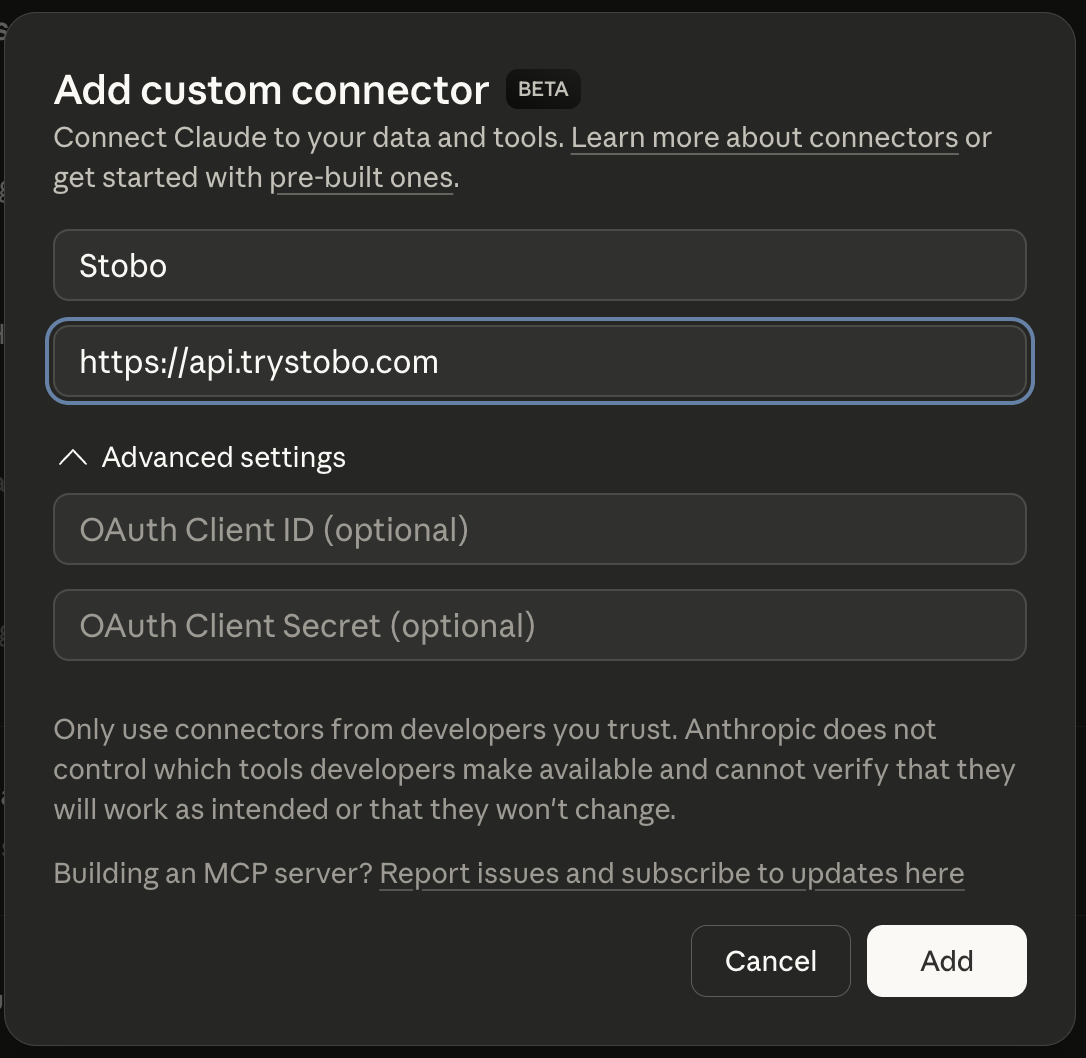

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or for an IDE.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 63% | Found, 6 security headers missing, 1/4 contact signals found |
| Expertise | 80% | All checks passing |
| Authoritativeness | 69% | Found |
| Experience | 33% | Found, Freshness issues, Hierarchy issues, Found |
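
The Trustworthiness row reports six missing security headers but does not name them. As a rough sketch, the check below compares a response's headers against a commonly recommended set. The six names in `RECOMMENDED_SECURITY_HEADERS` and the function `missing_security_headers` are assumptions made for illustration, not the list or code the audit uses.

```python
from typing import Mapping

# Assumption: the audit does not say which six headers it checks. This is a
# commonly recommended set, listed here only for illustration.
RECOMMENDED_SECURITY_HEADERS = (
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
    "Referrer-Policy",
    "Permissions-Policy",
)


def missing_security_headers(response_headers: Mapping[str, str]) -> list[str]:
    """Return recommended headers absent from a response (case-insensitive)."""
    present = {name.lower() for name in response_headers}
    return [h for h in RECOMMENDED_SECURITY_HEADERS if h.lower() not in present]


if __name__ == "__main__":
    # Hypothetical response headers; a real check would read them from an HTTP client.
    sample = {"content-type": "text/html", "x-frame-options": "DENY"}
    for header in missing_security_headers(sample):
        print("missing:", header)
```

The function takes a plain mapping, so headers collected by any HTTP client can be passed in as a dict.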

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: hypercubic.ai

- stobo this article: hypercubic.ai/blog/my-article

- Generate a robots.txt for hypercubic.ai

- stobo my blog: hypercubic.ai/blog

Recommendations

- Freshness issues: no date signals found (AEO)

  Add date markup (datePublished, dateModified) so AI engines know content is current. Stobo generates the JSON-LD. Prompt: Generate freshness code for hypercubic.ai

- llms.txt not found (HTTP 404) (AEO)

  Create an llms.txt file so AI models can understand what hypercubic.ai does. Stobo generates one automatically. Prompt: Generate an llms.txt for hypercubic.ai

- Freshness issues: no date signals found (E-E-A-T)
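
To make the freshness and llms.txt recommendations above concrete, here is a minimal sketch of what such output could look like. It is not Stobo's generator. The helper names `article_jsonld` and `llms_txt`, the sample dates, and the link descriptions are hypothetical. The JSON-LD uses the schema.org `Article` type with `datePublished` and `dateModified`, and the llms.txt layout (H1 title, blockquote summary, a section of links) follows the public llms.txt proposal.

```python
import json
from datetime import date


def article_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Build a <script> tag carrying schema.org Article date signals."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


def llms_txt(name: str, summary: str, links: list[tuple[str, str, str]]) -> str:
    """Render a minimal llms.txt: H1 title, blockquote summary, one link section."""
    lines = [f"# {name}", "", f"> {summary}", "", "## Pages", ""]
    lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values; real dates and page lists come from the site itself.
    print(article_jsonld(
        "My article",
        "https://hypercubic.ai/blog/my-article",
        date(2025, 1, 15),
        date(2025, 2, 1),
    ))
    print(llms_txt(
        "Hypercubic",
        "Agentic AI platform for mainframe modernization.",
        [("Blog", "https://hypercubic.ai/blog", "Articles and updates")],
    ))
```

The script tag goes into each page's HTML, and the llms.txt text is served from the site root, which is where the audit received the 404.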

Frequently asked questions

- Why can't AI crawlers find my llms.txt file on hypercubic.ai?

  Your site returns a 404 error when AI crawlers request the llms.txt file. This file helps AI systems understand how to interact with your content. To fix this, create an llms.txt file and upload it to your site's root directory.

- How do search engines know when my content was published or updated?

  Your site lacks the date signals that search engines use to determine content freshness. Add publication dates, last-modified dates, or structured data timestamps to your pages. This helps search engines understand how current your content is.

- What happens when my site doesn't show content publication dates?

  Without date signals, search engines can't determine whether your content is fresh or outdated. This can hurt your rankings for time-sensitive queries. Add visible dates or structured data markup to show when content was created or updated.

- Should I add FAQ schema markup to my hypercubic.ai pages?

  Yes, your site currently has no FAQ schema markup. Adding FAQPage structured data helps search engines display your questions and answers directly in search results. This can increase your click-through rates and visibility.

- Are my images slowing down my site's loading speed?

  Your site has 18 images that need optimization. Large, unoptimized images slow page loading and hurt user experience. Compress your images and use modern formats like WebP to improve performance, and add proper alt text for accessibility.
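
The FAQ schema answer above mentions FAQPage structured data. As a hedged illustration, the sketch below builds that JSON-LD from question and answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The function name `faq_jsonld` is hypothetical, and the sample pair is taken from this page's own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_jsonld([
        (
            "Are my images slowing down my site's loading speed?",
            "Large, unoptimized images slow page loading and hurt user experience.",
        ),
    ]))
```

The resulting JSON is embedded in the page inside a `<script type="application/ld+json">` element, and the marked-up questions should match FAQ content that is visible on the page.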