Deeptrace SEO & AI Visibility Audit

Deeptrace is an AI-powered incident response platform that automatically investigates engineering alerts and incidents. It analyzes logs and code with semantic understanding to identify root causes, helping on-call teams reduce investigation time and resolve issues faster.

deeptrace.com

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 76% | B |
| AEO (AI Visibility) | 2% | F |
| E-E-A-T | 40% | F |
| Combined | 38% | N/A |

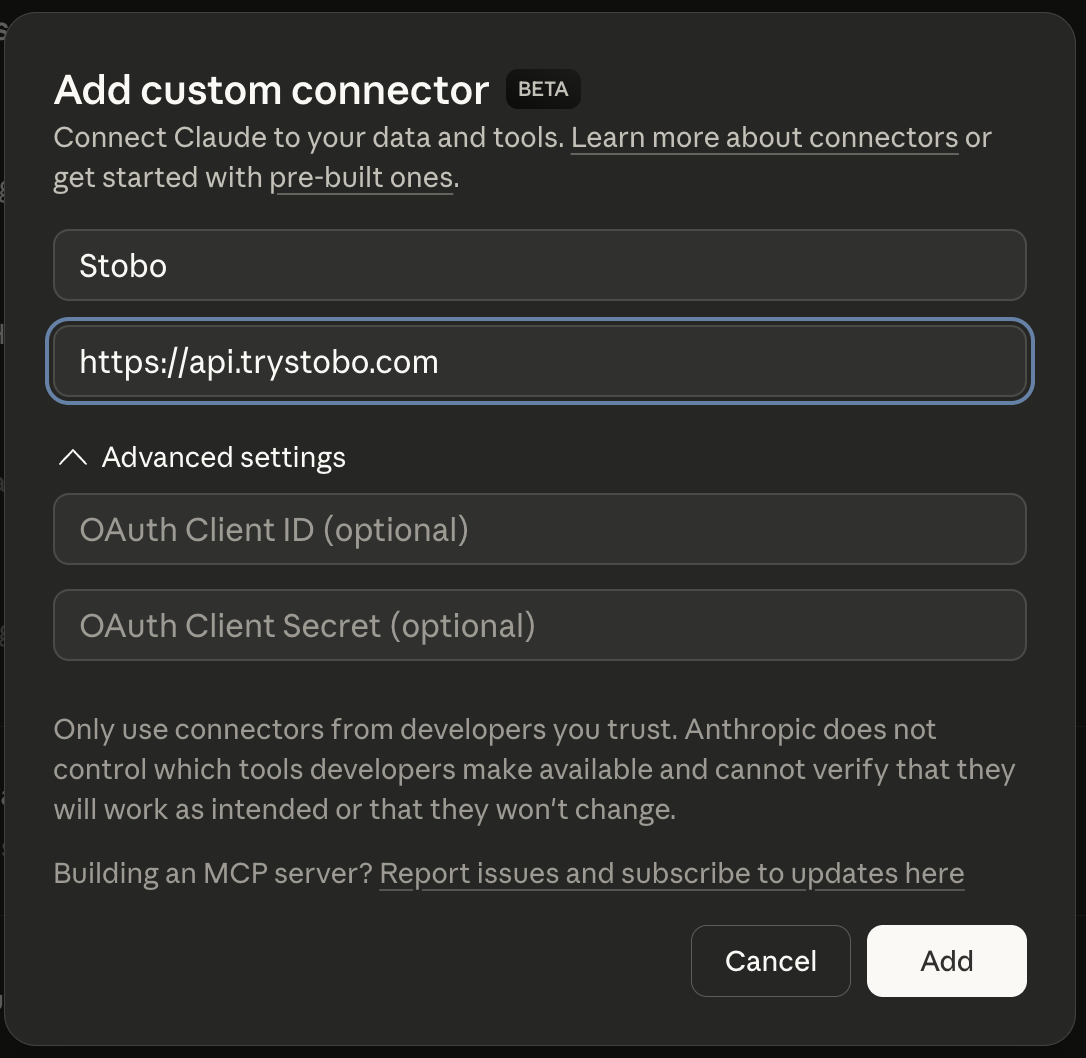

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or for an IDE.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 43% | No about/team page, social profiles, or LinkedIn links detected; no privacy policy link detected; 6 security headers missing; no terms of service link detected; 1/4 contact signals found |
| Expertise | 60% | 259 words, 9 headings, 20 outbound links |
| Authoritativeness | 29% | No Organization JSON-LD schema found; no JSON-LD schema types detected; Found |
| Experience | 27% | Found; freshness issues; hierarchy issues; no first-hand experience signals detected |

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: deeptrace.com

- stobo this article: deeptrace.com/blog/my-article

- Generate a robots.txt for deeptrace.com

- stobo my blog: deeptrace.com/blog

Recommendations

- robots.txt not accessible (HTTP 404) [AEO]: Add a robots.txt that allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: "Generate a robots.txt for deeptrace.com"

- Freshness issues: no date signals found [AEO]: Add date markup (datePublished, dateModified) so AI engines know the content is current. Stobo generates the JSON-LD. Prompt: "Generate freshness code for deeptrace.com"

- llms.txt not found (HTTP 404) [AEO]: Create an llms.txt file so AI models can understand what deeptrace.com does. Stobo generates one automatically. Prompt: "Generate an llms.txt for deeptrace.com"
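To make the robots.txt and llms.txt recommendations concrete, here is a minimal Python sketch of what such files might contain. It is not Stobo's generator. The function names, the `## Pages` section title, and the `https://` link are assumptions for illustration, and the llms.txt layout follows the publicly proposed convention (H1 title, blockquote summary, link lists). The standard-library `urllib.robotparser` is used only to sanity-check that the generated rules permit the crawlers named in the audit.

```python
from urllib.robotparser import RobotFileParser

# The two AI crawler tokens named in the audit recommendation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def build_robots_txt(ai_crawlers, sitemap_url=None):
    """Return robots.txt text that explicitly allows each listed crawler."""
    lines = []
    for agent in ai_crawlers:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    # Default group: every other crawler may fetch everything.
    lines += ["User-agent: *", "Allow: /"]
    if sitemap_url:
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


def build_llms_txt(name, summary, links):
    """Return llms.txt text: H1 title, blockquote summary, one link section."""
    lines = [f"# {name}", "", f"> {summary}", "", "## Pages", ""]
    lines += [f"- [{title}]({url})" for title, url in links]
    return "\n".join(lines) + "\n"


robots = build_robots_txt(AI_CRAWLERS)

# Sanity check: parse the generated text and ask whether GPTBot may crawl.
parser = RobotFileParser()
parser.parse(robots.splitlines())
print(parser.can_fetch("GPTBot", "https://deeptrace.com/"))

# Hypothetical link list; only the /blog path appears in the audit itself.
print(build_llms_txt(
    "Deeptrace",
    "AI-powered incident response platform that investigates alerts and incidents.",
    [("Blog", "https://deeptrace.com/blog")],
))
```

The output of each function would be served as plain text at `/robots.txt` and `/llms.txt` on the site root. Which pages belong in the link list is a content decision the audit does not specify.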


Frequently asked questions

- What is llms.txt and why does my site need one?

- Your site is missing an llms.txt file, which helps AI systems understand your content better. This file tells language models how to interpret and use your website's information. Without it, AI tools may misunderstand or ignore your content when generating responses.

- Why can't search engines find my robots.txt file?

- Your robots.txt file returns a 404 error, meaning it's missing or inaccessible. Crawlers generally treat a missing robots.txt as permission to crawl everything, so you lose the ability to state explicit rules for AI crawlers or to steer crawlers away from unwanted pages, and they may waste resources there.

- How do missing date signals hurt my search rankings?

- Your content lacks publication or update dates, which search engines use to determine freshness. Google favors recent, relevant content for many queries. Without date signals, your pages may rank lower, especially for topics where timeliness matters to users.

- What does it mean when my site has no opening content?

- Your pages lack clear introductory content that directly answers user queries. Search engines look for immediate, relevant information at the start of pages. Missing opening content reduces your chances of appearing in featured snippets and answer boxes.

- How can I fix content freshness issues on my website?

- Add clear publication and last-updated dates to your content using proper HTML markup. Include timestamps in your page structure and consider adding modification dates to your sitemap. Regular content updates with visible dates signal freshness to search engines.
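The date-markup advice above can be sketched in Python as a small helper that emits schema.org `Article` JSON-LD with `datePublished` and `dateModified`. This is an illustrative sketch, not the markup Stobo generates: the function name, the headline, and the dates are hypothetical, and the article URL is the placeholder path used earlier in this report.

```python
import json
from datetime import date


def build_article_jsonld(headline, url, published, modified):
    """Return a <script> tag carrying schema.org Article date markup."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        # ISO 8601 dates (YYYY-MM-DD).
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical values for illustration only.
print(build_article_jsonld(
    "Example post",
    "https://deeptrace.com/blog/my-article",
    date(2025, 1, 15),
    date(2025, 2, 1),
))
```

The resulting tag would go in the page's HTML. Keeping the visible on-page date consistent with `dateModified` avoids sending conflicting freshness signals.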