Vercel Security Checkpoint SEO & AI Visibility Audit

Vercel Security Checkpoint is a security scanning platform that protects web applications deployed on Vercel's infrastructure. It automatically scans deployments for vulnerabilities, misconfigured access controls, and compliance issues, helping teams identify and remediate security risks before code reaches production.

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 58% | D |
| AEO (AI Visibility) | 2% | F |
| E-E-A-T | 24% | F |
| Combined | 30% | n/a |

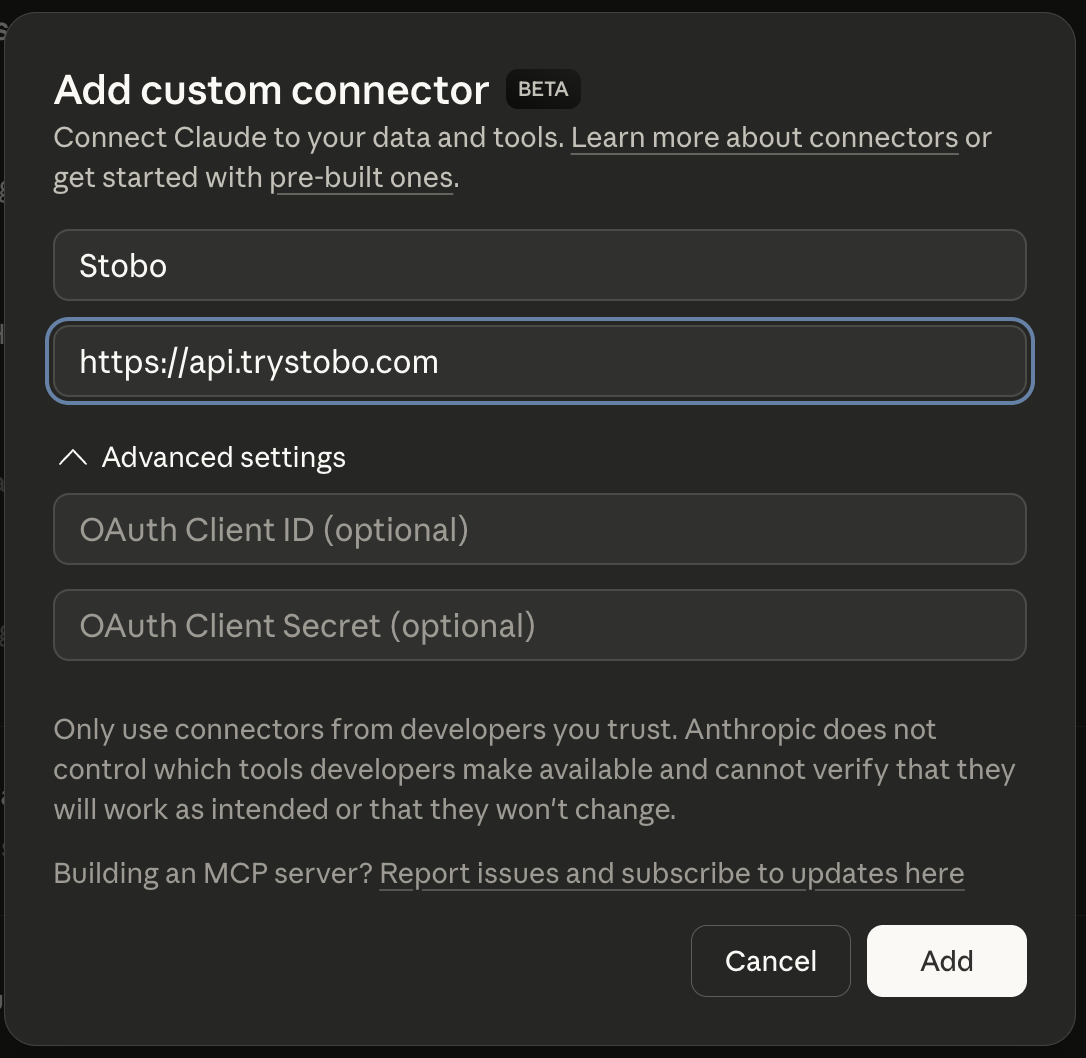

The fastest way to fix your site is to use our Custom Connector with Claude Desktop or an IDE.

E-E-A-T Breakdown

| Signal | Score | Issue |
|---|---|---|
| Trustworthiness | 31% | HTTPS properly configured; no about/team page, social profiles, or LinkedIn links detected; no privacy policy link detected; 7 security headers missing; no terms of service link detected; 1/4 contact signals found |
| Expertise | 27% | 20 words; 0 headings; 1 outbound link |
| Authoritativeness | 20% | No Organization JSON-LD schema found; no JSON-LD schema types detected; no third-party review or trust signals detected |
| Experience | 0% | No social proof signals detected; freshness issues; no headings found on page; no first-hand experience signals detected |
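
The Authoritativeness row flags a missing Organization JSON-LD schema. As a rough illustration of what such markup could look like, here is a minimal Python sketch that builds a schema.org Organization object and wraps it in a script tag. The function name and every value passed to it (organization name, URL, profile link) are placeholders for illustration, not data from this audit, and this is not necessarily the markup Stobo would generate.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a <script> tag holding a schema.org Organization object."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # links to official social or LinkedIn profiles
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values only; replace with the real organization details.
snippet = organization_jsonld(
    name="Example Org",
    url="https://example.com",
    same_as=["https://www.linkedin.com/company/example"],
)
print(snippet)
```

The resulting tag would typically go in the page's head element.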

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: caretta.so
- stobo this article: caretta.so/blog/my-article
- Generate a robots.txt for caretta.so
- stobo my blog: caretta.so/blog

Recommendations

- Freshness issues: no date signals found (AEO)

  Add date markup (datePublished, dateModified) so AI engines know content is current. Stobo generates the JSON-LD.

  Prompt: Generate freshness code for caretta.so

- No opening content found (AEO)

  Add a direct answer in the first 40-60 words of the page. AI engines pull from the opening paragraph.

  Prompt: stobo this site: caretta.so

- robots.txt not accessible (HTTP 429) (AEO)

  Update robots.txt to allow AI crawlers like GPTBot and ClaudeBot. Stobo generates an AI-friendly version.

  Prompt: Generate a robots.txt for caretta.so
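
For the robots.txt recommendation, the sketch below builds a file that explicitly allows GPTBot and ClaudeBot and then checks it with Python's standard `urllib.robotparser`. The helper name and the catch-all `User-agent: *` rule are assumptions (the latter presumes the whole site is meant to be public), and this is not the file Stobo generates. It also does not address the HTTP 429 response itself, which has to be resolved in the hosting configuration.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot"]  # the two crawlers named in the audit


def build_robots_txt(agents: list[str]) -> str:
    """Build a robots.txt that explicitly allows each listed crawler."""
    groups = [f"User-agent: {agent}\nAllow: /" for agent in agents]
    # Assumption: the site is fully public, so all other bots are allowed too.
    groups.append("User-agent: *\nAllow: /")
    return "\n\n".join(groups) + "\n"


robots_txt = build_robots_txt(AI_CRAWLERS)
print(robots_txt)

# Parse the generated text and confirm each crawler may fetch a page.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in AI_CRAWLERS:
    print(agent, parser.can_fetch(agent, "https://caretta.so/blog"))
```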


Frequently asked questions

- Why doesn't my site show any publication dates or freshness signals?

  Your site has no date signals anywhere on your pages. Search engines can't tell when your content was published or updated. Add publication dates, last modified timestamps, or structured data with date information to help search engines understand your content's freshness.

- What does it mean when there's no opening content found on my pages?

  Your pages lack clear introductory content that directly answers user queries. Search engines need opening paragraphs that immediately address what users are looking for. Add concise, answer-focused introductions at the top of your pages to improve visibility.

- Why can't search engines access my robots.txt file properly?

  Your robots.txt file returns an HTTP 429 error, meaning it's being rate-limited or blocked. This prevents search engines from understanding your crawling preferences. Check your server configuration and ensure robots.txt is accessible without restrictions or rate limiting.

- Should my website have an llms.txt file for AI crawlers?

  Your llms.txt file is missing or inaccessible due to HTTP 429 errors. This file helps AI systems understand how to interact with your content. Create an llms.txt file with clear guidelines for AI crawlers and ensure it's accessible.

- How do missing date signals hurt my site's search performance?

  Without date signals, search engines can't assess your content's relevance or freshness. This hurts rankings for time-sensitive queries and reduces trust signals. Add visible publication dates, update timestamps, and structured data to show when content was created or modified.
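
The structured-data part of that advice can be sketched in a few lines of Python. The example emits schema.org Article markup carrying the `datePublished` and `dateModified` properties named in the recommendations. The function name, the choice of the Article type, the headline, and both dates are illustrative assumptions, not values taken from the audited site.

```python
import json
from datetime import date


def freshness_jsonld(headline: str, published: date, modified: date) -> str:
    """Return a <script> tag with Article date signals in ISO 8601 form."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder headline and dates, for illustration only.
freshness_snippet = freshness_jsonld(
    headline="Example article",
    published=date(2024, 1, 15),
    modified=date(2024, 3, 2),
)
print(freshness_snippet)
```

Pairing this markup with a visible "last updated" date on the page keeps the human-readable and machine-readable signals consistent.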