BeeSafe AI SEO & AI Visibility Audit

BeeSafe AI is a fraud prevention platform that stops scams by engaging directly with fraudsters to identify and intercept their operations. It uses anti-scam agents to detect fraudulent accounts in real-time, disrupting trust-based scams before they reach victims across financial services and other sectors.

SEO + AEO + E-E-A-T combined

Overall Scores

| Area | Score | Grade |
|---|---|---|
| SEO | 73% | C |
| AEO (AI Visibility) | 2% | F |
| E-E-A-T | 43% | F |
| Combined | 37% | N/A |

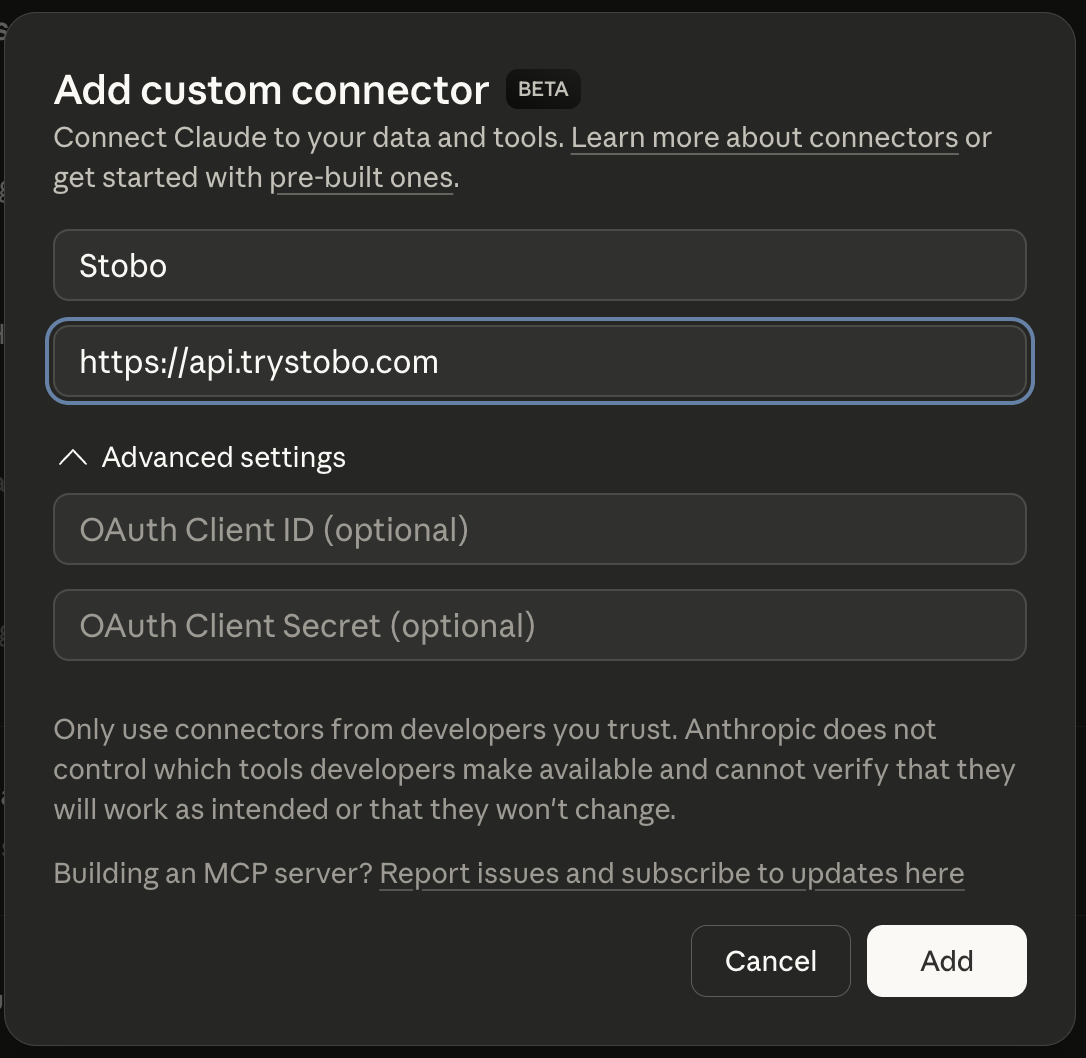

The fastest way to fix your site is to use our Custom Connector for Claude Desktop or other IDEs.

E-E-A-T Breakdown

| Signal | Score | Issues |
|---|---|---|
| Trustworthiness | 56% | No about/team page, social profiles, or LinkedIn links detected; 6 security headers missing; no terms of service link detected |
| Expertise | 47% | 399 words, 22 headings, 0 outbound links |
| Authoritativeness | 29% | No Organization JSON-LD schema found; no JSON-LD schema types detected; Found |
| Experience | 29% | Found; Freshness issues; Found |
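The Authoritativeness row flags a missing Organization JSON-LD schema. As a rough illustration of what that markup could look like, the sketch below builds a schema.org Organization block in Python. The `organization_jsonld` helper is hypothetical, the `https://beesafe.ai` URL assumes the site is served over HTTPS at that host, and the empty `same_as` list is a placeholder to be filled with the organization's real profile URLs.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Return a <script> tag containing schema.org Organization markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
    }
    # Only emit sameAs when real profile URLs are supplied.
    if same_as:
        data["sameAs"] = same_as
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = organization_jsonld(
    name="BeeSafe AI",
    url="https://beesafe.ai",  # assumption: canonical HTTPS URL
    description=(
        "Fraud prevention platform that stops scams by engaging directly "
        "with fraudsters to identify and intercept their operations."
    ),
    same_as=[],  # placeholder: add real social/LinkedIn profile URLs here
)
print(snippet)
```

The resulting tag would typically go in the page's `<head>`. Filling in `same_as` with real profiles would also speak to the missing social links noted under Trustworthiness.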

Stobo finds what's broken and generates the fixes

Try these in Claude Desktop

- stobo my site: beesafe.ai

- stobo this article: beesafe.ai/blog/my-article

- Generate a robots.txt for beesafe.ai

- stobo my blog: beesafe.ai/blog

Recommendations

- Freshness issues: no date signals found (AEO). Add date markup (datePublished, dateModified) so AI engines know the content is current. Stobo generates the JSON-LD. Prompt: Generate freshness code for beesafe.ai

- No opening content found (AEO). Add a direct answer in the first 40-60 words of the page. AI engines pull from the opening paragraph. Prompt: stobo this site: beesafe.ai

- robots.txt not accessible (HTTP 404) (AEO). Publish a robots.txt that allows AI crawlers such as GPTBot and ClaudeBot. Stobo generates an AI-friendly version. Prompt: Generate a robots.txt for beesafe.ai
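To make the robots.txt recommendation concrete, here is a minimal sketch that builds a permissive robots.txt naming GPTBot and ClaudeBot and then checks it with Python's standard `urllib.robotparser`. The `build_robots_txt` helper is hypothetical and the allow-everything policy is an assumption. A real site may want Disallow rules for private paths, and this is not necessarily the file Stobo would generate.

```python
from urllib.robotparser import RobotFileParser

# AI crawler user agents named in the recommendation above.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def build_robots_txt(ai_agents: list[str]) -> str:
    """Build a robots.txt that explicitly allows the given agents and all others."""
    lines: list[str] = []
    for agent in ai_agents:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    # Fallback group for every other crawler (assumed policy: allow everything).
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(AI_CRAWLERS)
print(robots_txt)

# Sanity check: parse the generated file and confirm each AI crawler may fetch a page.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in AI_CRAWLERS:
    print(agent, parser.can_fetch(agent, "https://beesafe.ai/blog/"))
```

The generated text would be served at the site root as /robots.txt. Parsing it back before deploying is a cheap way to catch a rule that blocks a crawler by accident.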

Track your score over time

We'll re-audit your site in 7 days and email you what changed. Free, automatic.

Embed your audit badge

Show your score on your README or website.

Frequently asked questions

- Why doesn't my site show when content was published or updated?

- Your site lacks the date signals that search engines use to judge content freshness. Pages without publication dates, last-modified dates, or date schema markup can look outdated to both users and search engines, which can hurt rankings and click-through rates.

- What happens when my robots.txt file returns a 404 error?

- Your robots.txt file isn't accessible and returns a 404 error. Crawlers generally treat a missing robots.txt as permission to crawl everything, so you lose the ability to steer them away from unimportant pages, set rules for specific bots such as AI crawlers, or advertise your sitemap location.

- How does missing llms.txt affect my site's AI visibility?

- Your site lacks an llms.txt file. llms.txt is a proposed convention, not a formal standard: a Markdown file at the site root that gives language models a concise summary of your site and links to its key pages. It does not control crawling (that is the job of robots.txt), but without it AI systems have no curated overview of your most important content.

- Why can't search engines find direct answers on my pages?

- Your pages lack clear opening content that directly answers user queries. Search engines look for immediate, relevant responses at the beginning of content to feature in snippets and answer boxes. Without this structure, you miss valuable visibility opportunities.

- How do freshness issues impact my search engine rankings?

- Search engines can't determine when your content was created or updated due to missing date signals. This makes your pages appear stale and less trustworthy, leading to lower rankings for queries where freshness matters, especially in competitive topics.
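As a rough illustration of the date signals discussed above, the sketch below emits schema.org Article markup with datePublished and dateModified. The `article_dates_jsonld` helper, the headline, and both dates are hypothetical placeholders. The URL reuses the example article path from the prompts earlier in this report and assumes HTTPS.

```python
import json
from datetime import datetime, timezone


def article_dates_jsonld(headline: str, url: str,
                         published: datetime, modified: datetime) -> str:
    """Return a <script> tag with Article markup carrying ISO 8601 date signals."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    # Escape "</" so a value cannot terminate the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


freshness_snippet = article_dates_jsonld(
    headline="My article",                        # placeholder headline
    url="https://beesafe.ai/blog/my-article",     # example path, assumed HTTPS
    published=datetime(2024, 1, 15, tzinfo=timezone.utc),  # placeholder date
    modified=datetime(2024, 3, 2, tzinfo=timezone.utc),    # placeholder date
)
print(freshness_snippet)
```

Using timezone-aware datetimes keeps the serialized values unambiguous. The real publication and revision dates should come from the CMS rather than being hard-coded.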